Benchmarks

This module gathers typical evolutionary computation (EC) benchmark functions so that they can be imported easily and used to benchmark examples.

| Single Objective Continuous | Multi Objective Continuous | Binary | Symbolic Regression |
|---|---|---|---|
| cigar() | fonseca() | chuang_f1() | kotanchek() |
| plane() | kursawe() | chuang_f2() | salustowicz_1d() |
| sphere() | schaffer_mo() | chuang_f3() | salustowicz_2d() |
| rand() | dtlz1() | royal_road1() | unwrapped_ball() |
| ackley() | dtlz2() | royal_road2() | rational_polynomial() |
| bohachevsky() | dtlz3() | | rational_polynomial2() |
| griewank() | dtlz4() | | sin_cos() |
| h1() | zdt1() | | ripple() |
| himmelblau() | zdt2() | | |
| rastrigin() | zdt3() | | |
| rastrigin_scaled() | zdt4() | | |
| rastrigin_skew() | zdt6() | | |
| rosenbrock() | | | |
| schaffer() | | | |
| schwefel() | | | |
| shekel() | | | |

Continuous Optimization

deap.benchmarks.cigar(individual)

Cigar test objective function.

- Type: minimization
- Range: none
- Global optima: \(x_i = 0, \forall i \in \lbrace 1 \ldots N\rbrace\), \(f(\mathbf{x}) = 0\)
- Function: \(f(\mathbf{x}) = x_0^2 + 10^6\sum_{i=1}^N\,x_i^2\)

deap.benchmarks.plane(individual)

Plane test objective function.

- Type: minimization
- Range: none
- Global optima: \(x_i = 0, \forall i \in \lbrace 1 \ldots N\rbrace\), \(f(\mathbf{x}) = 0\)
- Function: \(f(\mathbf{x}) = x_0\)

deap.benchmarks.sphere(individual)

Sphere test objective function.

- Type: minimization
- Range: none
- Global optima: \(x_i = 0, \forall i \in \lbrace 1 \ldots N\rbrace\), \(f(\mathbf{x}) = 0\)
- Function: \(f(\mathbf{x}) = \sum_{i=1}^Nx_i^2\)
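To make the sphere formula concrete, here is a minimal plain-Python sketch. The name sphere_sketch is hypothetical and this is not DEAP's source code; it assumes, like the evaluation functions shown in the tools examples on this page, that a single-objective function returns a one-element tuple.

```python
def sphere_sketch(individual):
    """Sum of squared genes, returned as a one-element fitness tuple."""
    return (sum(x * x for x in individual),)


# The origin is the global optimum with value 0.
print(sphere_sketch([0.0, 0.0, 0.0]))   # (0.0,)
print(sphere_sketch([1.0, -2.0, 3.0]))  # (14.0,)
```

The trailing comma matters: the result is a tuple with one objective value, not a bare float.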

-

deap.benchmarks.rand(individual)[source]¶ Random test objective function.

Type minimization or maximization Range none Global optima none Function \(f(\mathbf{x}) = \text{\texttt{random}}(0,1)\)

-

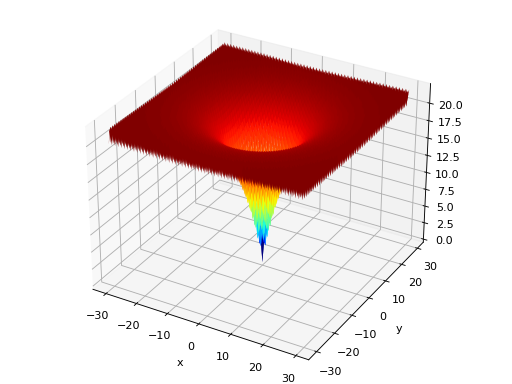

deap.benchmarks.ackley(individual)[source]¶ Ackley test objective function.

Type minimization Range \(x_i \in [-15, 30]\) Global optima \(x_i = 0, \forall i \in \lbrace 1 \ldots N\rbrace\), \(f(\mathbf{x}) = 0\) Function \[f(\mathbf{x}) = 20 - 20\exp\left(-0.2\sqrt{\frac{1}{N} \sum_{i=1}^N x_i^2} \right) + e - \exp\left(\frac{1}{N}\sum_{i=1}^N \cos(2\pi x_i) \right)\](Source code, png, hires.png, pdf)
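The Ackley formula can be checked numerically with a short sketch. The function name ackley_sketch is hypothetical, and the code is a direct transcription of the formula above rather than DEAP's implementation; the one-element tuple return is an assumption carried over from the other evaluation examples on this page.

```python
import math


def ackley_sketch(individual):
    """Evaluate the Ackley formula term by term."""
    n = len(individual)
    mean_sq = sum(x * x for x in individual) / n
    mean_cos = sum(math.cos(2.0 * math.pi * x) for x in individual) / n
    value = (20.0 - 20.0 * math.exp(-0.2 * math.sqrt(mean_sq))
             + math.e - math.exp(mean_cos))
    return (value,)


at_origin = ackley_sketch([0.0, 0.0, 0.0])[0]
elsewhere = ackley_sketch([1.0, 1.0])[0]
print(at_origin, elsewhere)
```

At the origin the two exponential terms cancel the constants 20 and e, which is why the global optimum evaluates to (numerically) zero.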

-

deap.benchmarks.bohachevsky(individual)[source]¶ Bohachevsky test objective function.

Type minimization Range \(x_i \in [-100, 100]\) Global optima \(x_i = 0, \forall i \in \lbrace 1 \ldots N\rbrace\), \(f(\mathbf{x}) = 0\) Function \[f(\mathbf{x}) = \sum_{i=1}^{N-1}(x_i^2 + 2x_{i+1}^2 - 0.3\cos(3\pi x_i) - 0.4\cos(4\pi x_{i+1}) + 0.7)\](Source code, png, hires.png, pdf)

-

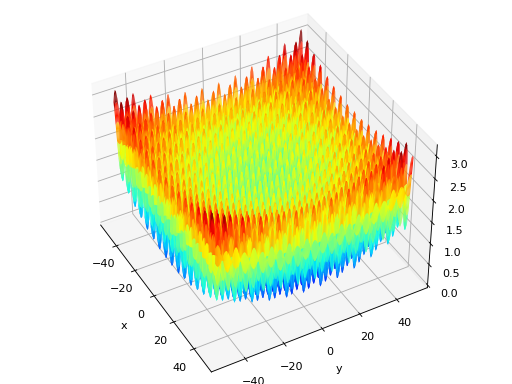

deap.benchmarks.griewank(individual)[source]¶ Griewank test objective function.

Type minimization Range \(x_i \in [-600, 600]\) Global optima \(x_i = 0, \forall i \in \lbrace 1 \ldots N\rbrace\), \(f(\mathbf{x}) = 0\) Function \[f(\mathbf{x}) = \frac{1}{4000}\sum_{i=1}^N\,x_i^2 - \prod_{i=1}^N\cos\left(\frac{x_i}{\sqrt{i}}\right) + 1\](Source code, png, hires.png, pdf)

-

deap.benchmarks.h1(individual)[source]¶ Simple two-dimensional function containing several local maxima. From: The Merits of a Parallel Genetic Algorithm in Solving Hard Optimization Problems, A. J. Knoek van Soest and L. J. R. Richard Casius, J. Biomech. Eng. 125, 141 (2003)

Type maximization Range \(x_i \in [-100, 100]\) Global optima \(\mathbf{x} = (8.6998, 6.7665)\), \(f(\mathbf{x}) = 2\)n Function \[f(\mathbf{x}) = \frac{\sin(x_1 - \frac{x_2}{8})^2 + \ \sin(x_2 + \frac{x_1}{8})^2}{\sqrt{(x_1 - 8.6998)^2 + \ (x_2 - 6.7665)^2} + 1}\](Source code, png, hires.png, pdf)

-

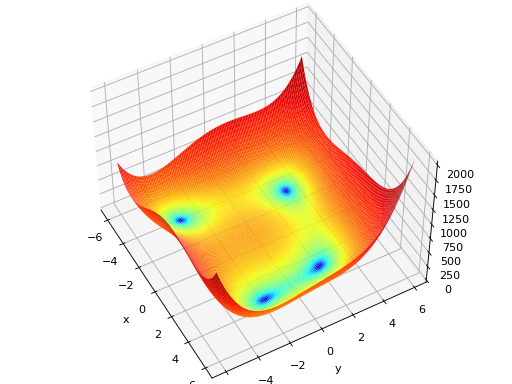

deap.benchmarks.himmelblau(individual)[source]¶ The Himmelblau’s function is multimodal with 4 defined minimums in \([-6, 6]^2\).

Type minimization Range \(x_i \in [-6, 6]\) Global optima \(\mathbf{x}_1 = (3.0, 2.0)\), \(f(\mathbf{x}_1) = 0\)n \(\mathbf{x}_2 = (-2.805118, 3.131312)\), \(f(\mathbf{x}_2) = 0\)n \(\mathbf{x}_3 = (-3.779310, -3.283186)\), \(f(\mathbf{x}_3) = 0\)n \(\mathbf{x}_4 = (3.584428, -1.848126)\), \(f(\mathbf{x}_4) = 0\)n Function \(f(x_1, x_2) = (x_1^2 + x_2 - 11)^2 + (x_1 + x_2^2 -7)^2\) (Source code, png, hires.png, pdf)

-

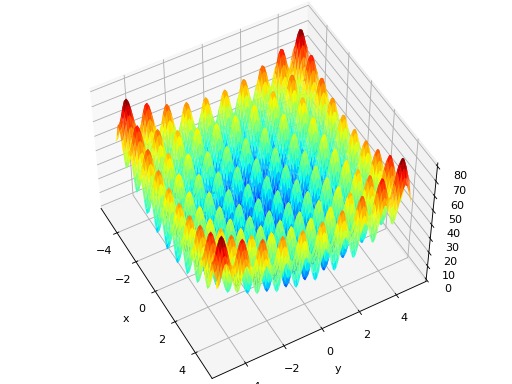

deap.benchmarks.rastrigin(individual)[source]¶ Rastrigin test objective function.

Type minimization Range \(x_i \in [-5.12, 5.12]\) Global optima \(x_i = 0, \forall i \in \lbrace 1 \ldots N\rbrace\), \(f(\mathbf{x}) = 0\) Function \(f(\mathbf{x}) = 10N + \sum_{i=1}^N x_i^2 - 10 \cos(2\pi x_i)\) (Source code, png, hires.png, pdf)
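The Rastrigin sum runs over both the quadratic and the cosine term of each gene. The sketch below (hypothetical name rastrigin_sketch, a plain-Python transcription under that reading, not DEAP's source) makes the grouping explicit and shows that the constant 10N cancels the cosine terms at the origin.

```python
import math


def rastrigin_sketch(individual):
    """10*N plus the per-gene sum of x^2 - 10*cos(2*pi*x)."""
    n = len(individual)
    total = sum(x * x - 10.0 * math.cos(2.0 * math.pi * x) for x in individual)
    return (10.0 * n + total,)


print(rastrigin_sketch([0.0, 0.0]))  # (0.0,)
print(rastrigin_sketch([1.0, 1.0]))  # close to (2.0,): a nearby local minimum region
```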

deap.benchmarks.rastrigin_scaled(individual)

Scaled Rastrigin test objective function.

\[f_{\text{RastScaled}}(\mathbf{x}) = 10N + \sum_{i=1}^N \left[\left(10^{\left(\frac{i-1}{N-1}\right)} x_i \right)^2 - 10\cos\left(2\pi 10^{\left(\frac{i-1}{N-1}\right)} x_i \right)\right]\]

deap.benchmarks.rastrigin_skew(individual)

Skewed Rastrigin test objective function.

\[f_{\text{RastSkew}}(\mathbf{x}) = 10N + \sum_{i=1}^N \left(y_i^2 - 10 \cos(2\pi x_i)\right)\]

\[\text{with } y_i = \begin{cases} 10\cdot x_i & \text{ if } x_i > 0,\\ x_i & \text{ otherwise } \end{cases}\]

-

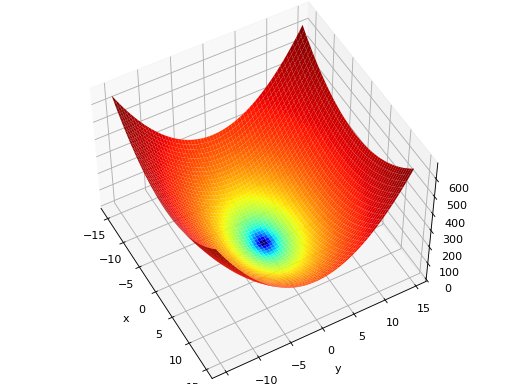

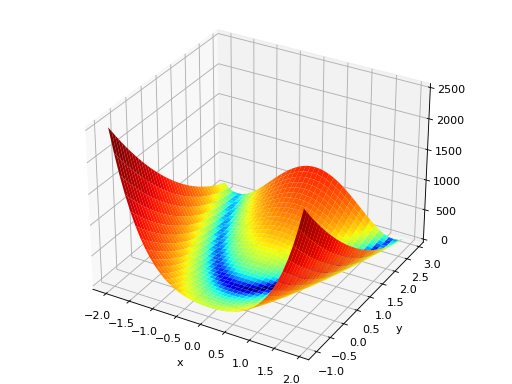

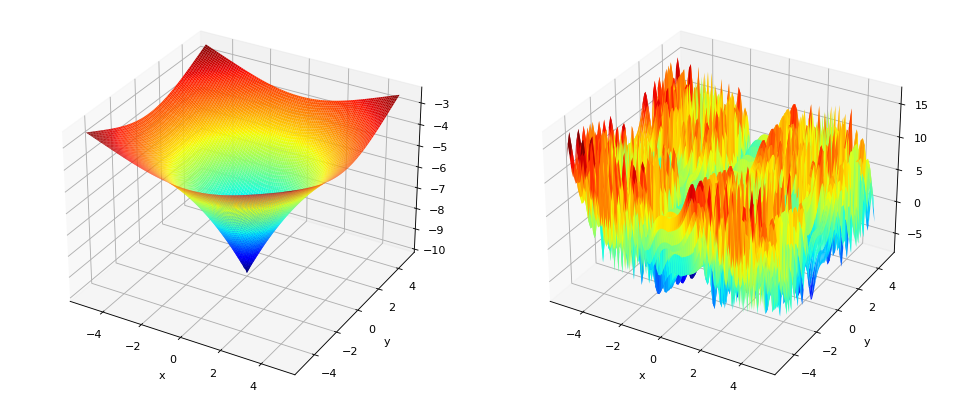

deap.benchmarks.rosenbrock(individual)[source]¶ Rosenbrock test objective function.

Type minimization Range none Global optima \(x_i = 1, \forall i \in \lbrace 1 \ldots N\rbrace\), \(f(\mathbf{x}) = 0\) Function \(f(\mathbf{x}) = \sum_{i=1}^{N-1} (1-x_i)^2 + 100 (x_{i+1} - x_i^2 )^2\) (Source code, png, hires.png, pdf)

-

deap.benchmarks.schaffer(individual)[source]¶ Schaffer test objective function.

Type minimization Range \(x_i \in [-100, 100]\) Global optima \(x_i = 0, \forall i \in \lbrace 1 \ldots N\rbrace\), \(f(\mathbf{x}) = 0\) Function \[f(\mathbf{x}) = \sum_{i=1}^{N-1} (x_i^2+x_{i+1}^2)^{0.25} \cdot \left[ \sin^2(50\cdot(x_i^2+x_{i+1}^2)^{0.10}) + 1.0 \right]\](Source code, png, hires.png, pdf)

-

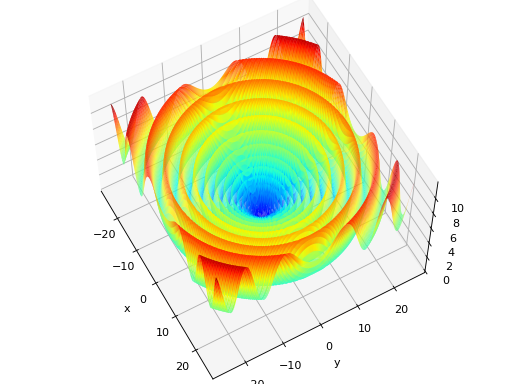

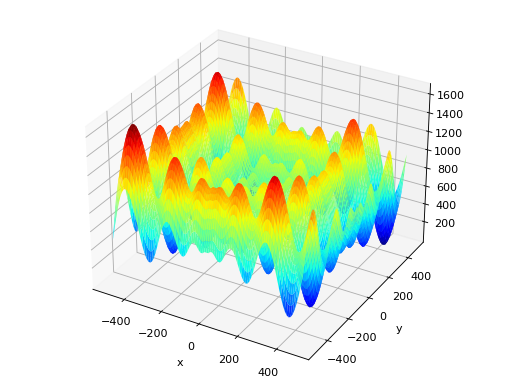

deap.benchmarks.schwefel(individual)[source]¶ Schwefel test objective function.

Type minimization Range \(x_i \in [-500, 500]\) Global optima \(x_i = 420.96874636, \forall i \in \lbrace 1 \ldots N\rbrace\), \(f(\mathbf{x}) = 0\) Function \[f(\mathbf{x}) = 418.9828872724339\cdot N - \sum_{i=1}^N\,x_i\sin\left(\sqrt{|x_i|}\right)\](Source code, png, hires.png, pdf)
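Unlike most functions in this section, the Schwefel optimum is not at the origin. The following sketch (hypothetical name schwefel_sketch, transcribed from the formula above and not taken from DEAP's source) evaluates the function at the documented optimum and at the origin for comparison.

```python
import math


def schwefel_sketch(individual):
    """418.98...*N minus the sum of x*sin(sqrt(|x|))."""
    n = len(individual)
    total = sum(x * math.sin(math.sqrt(abs(x))) for x in individual)
    return (418.9828872724339 * n - total,)


optimum = [420.96874636] * 3
print(schwefel_sketch(optimum)[0])    # very close to 0
print(schwefel_sketch([0.0] * 3)[0])  # 418.98... * 3, far from optimal
```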

-

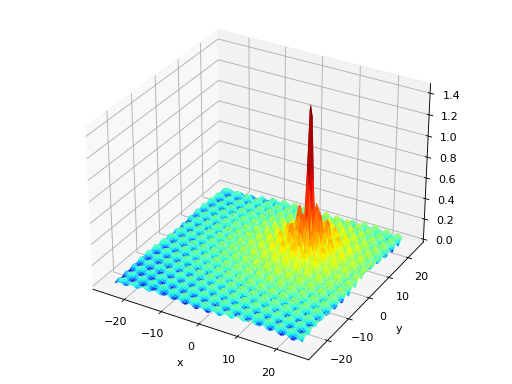

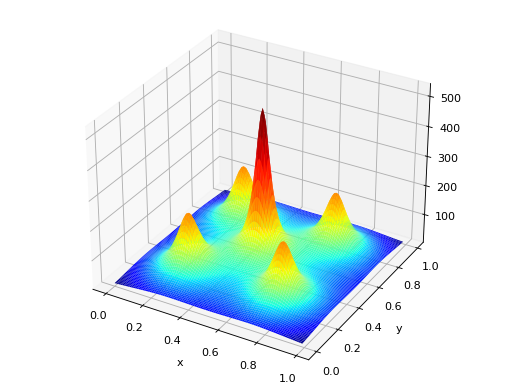

deap.benchmarks.shekel(individual, a, c)[source]¶ The Shekel multimodal function can have any number of maxima. The number of maxima is given by the length of any of the arguments a or c, a is a matrix of size \(M\times N\), where M is the number of maxima and N the number of dimensions and c is a \(M\times 1\) vector.

\(f_\text{Shekel}(\mathbf{x}) = \sum_{i = 1}^{M} \frac{1}{c_{i} + \sum_{j = 1}^{N} (x_{j} - a_{ij})^2 }\)

The following figure uses

\(\mathcal{A} = \begin{bmatrix} 0.5 & 0.5 \\ 0.25 & 0.25 \\ 0.25 & 0.75 \\ 0.75 & 0.25 \\ 0.75 & 0.75 \end{bmatrix}\) and \(\mathbf{c} = \begin{bmatrix} 0.002 \\ 0.005 \\ 0.005 \\ 0.005 \\ 0.005 \end{bmatrix}\), thus defining 5 maximums in \(\mathbb{R}^2\).

(Source code, png, hires.png, pdf)
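To illustrate how the rows of a and the entries of c shape the landscape, here is a plain-Python sketch of the Shekel formula using the matrix and vector given above. The name shekel_sketch and the tuple return are assumptions for illustration; this is not DEAP's implementation.

```python
def shekel_sketch(individual, a, c):
    """Sum over the M peaks of 1 / (c_i + squared distance to row a_i)."""
    total = 0.0
    for a_i, c_i in zip(a, c):
        sq_dist = sum((x - a_ij) ** 2 for x, a_ij in zip(individual, a_i))
        total += 1.0 / (c_i + sq_dist)
    return (total,)


A = [[0.5, 0.5], [0.25, 0.25], [0.25, 0.75], [0.75, 0.25], [0.75, 0.75]]
C = [0.002, 0.005, 0.005, 0.005, 0.005]

centre = shekel_sketch([0.5, 0.5], A, C)[0]
corner = shekel_sketch([0.25, 0.25], A, C)[0]
print(centre, corner)
```

A smaller c_i gives a taller, sharper peak, so with these values the peak at (0.5, 0.5) dominates the four surrounding ones.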

Multi-objective

deap.benchmarks.fonseca(individual)

Fonseca and Fleming’s multiobjective function. From: C. M. Fonseca and P. J. Fleming, “Multiobjective optimization and multiple constraint handling with evolutionary algorithms – Part II: Application example”, IEEE Transactions on Systems, Man and Cybernetics, 1998.

\(f_{\text{Fonseca}1}(\mathbf{x}) = 1 - e^{-\sum_{i=1}^{3}(x_i - \frac{1}{\sqrt{3}})^2}\)

\(f_{\text{Fonseca}2}(\mathbf{x}) = 1 - e^{-\sum_{i=1}^{3}(x_i + \frac{1}{\sqrt{3}})^2}\)

deap.benchmarks.kursawe(individual)

Kursawe multiobjective function.

\(f_{\text{Kursawe}1}(\mathbf{x}) = \sum_{i=1}^{N-1} -10 e^{-0.2 \sqrt{x_i^2 + x_{i+1}^2} }\)

\(f_{\text{Kursawe}2}(\mathbf{x}) = \sum_{i=1}^{N} \left(|x_i|^{0.8} + 5 \sin(x_i^3)\right)\)

deap.benchmarks.schaffer_mo(individual)

Schaffer’s multiobjective function on a one attribute individual. From: J. D. Schaffer, “Multiple objective optimization with vector evaluated genetic algorithms”, in Proceedings of the First International Conference on Genetic Algorithms, 1987.

\(f_{\text{Schaffer}1}(\mathbf{x}) = x_1^2\)

\(f_{\text{Schaffer}2}(\mathbf{x}) = (x_1-2)^2\)
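The two Schaffer objectives conflict: one is minimized at \(x_1 = 0\) and the other at \(x_1 = 2\). A minimal sketch of the two formulas (hypothetical name schaffer_mo_sketch, not DEAP's source) shows the trade-off on a one-attribute individual.

```python
def schaffer_mo_sketch(individual):
    """Return both objectives for a one-attribute individual."""
    x = individual[0]
    return (x ** 2, (x - 2) ** 2)


print(schaffer_mo_sketch([0.0]))  # (0.0, 4.0): best for the first objective
print(schaffer_mo_sketch([1.0]))  # (1.0, 1.0): a compromise between the two
print(schaffer_mo_sketch([2.0]))  # (4.0, 0.0): best for the second objective
```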

deap.benchmarks.dtlz1(individual, obj)

DTLZ1 multiobjective function. It returns a tuple of obj values. The individual must have at least obj elements. From: K. Deb, L. Thiele, M. Laumanns and E. Zitzler. Scalable Multi-Objective Optimization Test Problems. CEC 2002, pp. 825-830, IEEE Press, 2002.

\(g(\mathbf{x}_m) = 100\left(|\mathbf{x}_m| + \sum_{x_i \in \mathbf{x}_m}\left((x_i - 0.5)^2 - \cos(20\pi(x_i - 0.5))\right)\right)\)

\(f_{\text{DTLZ1}1}(\mathbf{x}) = \frac{1}{2} (1 + g(\mathbf{x}_m)) \prod_{i=1}^{m-1}x_i\)

\(f_{\text{DTLZ1}2}(\mathbf{x}) = \frac{1}{2} (1 + g(\mathbf{x}_m)) (1-x_{m-1}) \prod_{i=1}^{m-2}x_i\)

\(\ldots\)

\(f_{\text{DTLZ1}m-1}(\mathbf{x}) = \frac{1}{2} (1 + g(\mathbf{x}_m)) (1 - x_2) x_1\)

\(f_{\text{DTLZ1}m}(\mathbf{x}) = \frac{1}{2} (1 - x_1)(1 + g(\mathbf{x}_m))\)

Where \(m\) is the number of objectives and \(\mathbf{x}_m\) is a vector of the remaining attributes \([x_m~\ldots~x_n]\) of the individual in \(n > m\) dimensions.
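The indexing in the DTLZ1 definition is easier to follow in code. The sketch below is a plain-Python reading of the formulas above, with the hypothetical name dtlz1_sketch; it is not DEAP's implementation. It assumes 0-based lists, so \(\mathbf{x}_m\) corresponds to individual[obj - 1:], and it needs Python 3.8 or later for math.prod.

```python
import math


def dtlz1_sketch(individual, obj):
    """Return the obj DTLZ1 objective values as a tuple."""
    xm = individual[obj - 1:]   # remaining attributes, fed to g
    g = 100.0 * (len(xm) + sum((x - 0.5) ** 2
                               - math.cos(20.0 * math.pi * (x - 0.5))
                               for x in xm))
    head = individual[:obj - 1]  # x_1 ... x_{m-1}
    # First objective: product of all head attributes.
    objectives = [0.5 * (1.0 + g) * math.prod(head)]
    # Remaining objectives: shorter products times (1 - x_k).
    for k in range(obj - 2, -1, -1):
        objectives.append(0.5 * (1.0 + g) * math.prod(head[:k]) * (1.0 - head[k]))
    return tuple(objectives)


values = dtlz1_sketch([0.2, 0.7, 0.5, 0.5, 0.5], 3)
print(values, sum(values))
```

When every attribute of \(\mathbf{x}_m\) equals 0.5, g vanishes and the objectives sum to 0.5, which gives a quick sanity check for any implementation.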

deap.benchmarks.dtlz2(individual, obj)

DTLZ2 multiobjective function. It returns a tuple of obj values. The individual must have at least obj elements. From: K. Deb, L. Thiele, M. Laumanns and E. Zitzler. Scalable Multi-Objective Optimization Test Problems. CEC 2002, pp. 825-830, IEEE Press, 2002.

\(g(\mathbf{x}_m) = \sum_{x_i \in \mathbf{x}_m} (x_i - 0.5)^2\)

\(f_{\text{DTLZ2}1}(\mathbf{x}) = (1 + g(\mathbf{x}_m)) \prod_{i=1}^{m-1} \cos(0.5x_i\pi)\)

\(f_{\text{DTLZ2}2}(\mathbf{x}) = (1 + g(\mathbf{x}_m)) \sin(0.5x_{m-1}\pi ) \prod_{i=1}^{m-2} \cos(0.5x_i\pi)\)

\(\ldots\)

\(f_{\text{DTLZ2}m}(\mathbf{x}) = (1 + g(\mathbf{x}_m)) \sin(0.5x_{1}\pi )\)

Where \(m\) is the number of objectives and \(\mathbf{x}_m\) is a vector of the remaining attributes \([x_m~\ldots~x_n]\) of the individual in \(n > m\) dimensions.

deap.benchmarks.dtlz3(individual, obj)

DTLZ3 multiobjective function. It returns a tuple of obj values. The individual must have at least obj elements. From: K. Deb, L. Thiele, M. Laumanns and E. Zitzler. Scalable Multi-Objective Optimization Test Problems. CEC 2002, pp. 825-830, IEEE Press, 2002.

\(g(\mathbf{x}_m) = 100\left(|\mathbf{x}_m| + \sum_{x_i \in \mathbf{x}_m}\left((x_i - 0.5)^2 - \cos(20\pi(x_i - 0.5))\right)\right)\)

\(f_{\text{DTLZ3}1}(\mathbf{x}) = (1 + g(\mathbf{x}_m)) \prod_{i=1}^{m-1} \cos(0.5x_i\pi)\)

\(f_{\text{DTLZ3}2}(\mathbf{x}) = (1 + g(\mathbf{x}_m)) \sin(0.5x_{m-1}\pi ) \prod_{i=1}^{m-2} \cos(0.5x_i\pi)\)

\(\ldots\)

\(f_{\text{DTLZ3}m}(\mathbf{x}) = (1 + g(\mathbf{x}_m)) \sin(0.5x_{1}\pi )\)

Where \(m\) is the number of objectives and \(\mathbf{x}_m\) is a vector of the remaining attributes \([x_m~\ldots~x_n]\) of the individual in \(n > m\) dimensions.

deap.benchmarks.dtlz4(individual, obj, alpha)

DTLZ4 multiobjective function. It returns a tuple of obj values. The individual must have at least obj elements. The alpha parameter allows for a meta-variable mapping \(x_i \rightarrow x_i^\alpha\) in dtlz2(); the authors suggest \(\alpha = 100\). From: K. Deb, L. Thiele, M. Laumanns and E. Zitzler. Scalable Multi-Objective Optimization Test Problems. CEC 2002, pp. 825-830, IEEE Press, 2002.

\(g(\mathbf{x}_m) = \sum_{x_i \in \mathbf{x}_m} (x_i - 0.5)^2\)

\(f_{\text{DTLZ4}1}(\mathbf{x}) = (1 + g(\mathbf{x}_m)) \prod_{i=1}^{m-1} \cos(0.5x_i^\alpha\pi)\)

\(f_{\text{DTLZ4}2}(\mathbf{x}) = (1 + g(\mathbf{x}_m)) \sin(0.5x_{m-1}^\alpha\pi ) \prod_{i=1}^{m-2} \cos(0.5x_i^\alpha\pi)\)

\(\ldots\)

\(f_{\text{DTLZ4}m}(\mathbf{x}) = (1 + g(\mathbf{x}_m)) \sin(0.5x_{1}^\alpha\pi )\)

Where \(m\) is the number of objectives and \(\mathbf{x}_m\) is a vector of the remaining attributes \([x_m~\ldots~x_n]\) of the individual in \(n > m\) dimensions.

deap.benchmarks.zdt1(individual)

ZDT1 multiobjective function.

\(g(\mathbf{x}) = 1 + \frac{9}{n-1}\sum_{i=2}^n x_i\)

\(f_{\text{ZDT1}1}(\mathbf{x}) = x_1\)

\(f_{\text{ZDT1}2}(\mathbf{x}) = g(\mathbf{x})\left[1 - \sqrt{\frac{x_1}{g(\mathbf{x})}}\right]\)
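The ZDT family shares one structure: the first attribute sets the first objective, and the remaining attributes only enter through g. A small sketch of ZDT1 (hypothetical name zdt1_sketch, transcribed from the formulas above with 0-based indexing, not DEAP's source) illustrates this.

```python
import math


def zdt1_sketch(individual):
    """Return (f1, f2) for the ZDT1 formulas."""
    n = len(individual)
    g = 1.0 + 9.0 / (n - 1) * sum(individual[1:])
    f1 = individual[0]
    f2 = g * (1.0 - math.sqrt(f1 / g))
    return (f1, f2)


# With all trailing attributes at 0, g equals 1 and f2 = 1 - sqrt(f1).
print(zdt1_sketch([0.25, 0.0, 0.0]))  # (0.25, 0.5)
```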

deap.benchmarks.zdt2(individual)

ZDT2 multiobjective function.

\(g(\mathbf{x}) = 1 + \frac{9}{n-1}\sum_{i=2}^n x_i\)

\(f_{\text{ZDT2}1}(\mathbf{x}) = x_1\)

\(f_{\text{ZDT2}2}(\mathbf{x}) = g(\mathbf{x})\left[1 - \left(\frac{x_1}{g(\mathbf{x})}\right)^2\right]\)

deap.benchmarks.zdt3(individual)

ZDT3 multiobjective function.

\(g(\mathbf{x}) = 1 + \frac{9}{n-1}\sum_{i=2}^n x_i\)

\(f_{\text{ZDT3}1}(\mathbf{x}) = x_1\)

\(f_{\text{ZDT3}2}(\mathbf{x}) = g(\mathbf{x})\left[1 - \sqrt{\frac{x_1}{g(\mathbf{x})}} - \frac{x_1}{g(\mathbf{x})}\sin(10\pi x_1)\right]\)

deap.benchmarks.zdt4(individual)

ZDT4 multiobjective function.

\(g(\mathbf{x}) = 1 + 10(n-1) + \sum_{i=2}^n \left[ x_i^2 - 10\cos(4\pi x_i) \right]\)

\(f_{\text{ZDT4}1}(\mathbf{x}) = x_1\)

\(f_{\text{ZDT4}2}(\mathbf{x}) = g(\mathbf{x})\left[ 1 - \sqrt{x_1/g(\mathbf{x})} \right]\)

deap.benchmarks.zdt6(individual)

ZDT6 multiobjective function.

\(g(\mathbf{x}) = 1 + 9 \left[ \left(\sum_{i=2}^n x_i\right)/(n-1) \right]^{0.25}\)

\(f_{\text{ZDT6}1}(\mathbf{x}) = 1 - \exp(-4x_1)\sin^6(6\pi x_1)\)

\(f_{\text{ZDT6}2}(\mathbf{x}) = g(\mathbf{x}) \left[ 1 - (f_{\text{ZDT6}1}(\mathbf{x})/g(\mathbf{x}))^2 \right]\)

Binary Optimization

deap.benchmarks.binary.chuang_f1(individual)

Binary deceptive function from: Multivariate Multi-Model Approach for Globally Multimodal Problems, by Chung-Yao Chuang and Wen-Lian Hsu.

The function takes an individual of 40+1 dimensions and has two global optima, at [1,1,…,1] and [0,0,…,0].

deap.benchmarks.binary.chuang_f2(individual)

Binary deceptive function from: Multivariate Multi-Model Approach for Globally Multimodal Problems, by Chung-Yao Chuang and Wen-Lian Hsu.

The function takes an individual of 40+1 dimensions and has four global optima, at [1,1,…,0,0], [0,0,…,1,1], [1,1,…,1] and [0,0,…,0].

deap.benchmarks.binary.chuang_f3(individual)

Binary deceptive function from: Multivariate Multi-Model Approach for Globally Multimodal Problems, by Chung-Yao Chuang and Wen-Lian Hsu.

The function takes an individual of 40+1 dimensions and has two global optima, at [1,1,…,1] and [0,0,…,0].

deap.benchmarks.binary.royal_road1(individual, order)

Royal Road Function R1 as presented by Melanie Mitchell in “An Introduction to Genetic Algorithms”.
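Since the entry above gives no formula, the following is only a sketch of how a Royal Road R1 evaluation is commonly described: the bit string is split into consecutive blocks of length order, and each block made entirely of ones contributes order to the fitness. The name royal_road1_sketch, the handling of a trailing partial block (ignored here), and the tuple return are all assumptions, not DEAP's implementation.

```python
def royal_road1_sketch(individual, order):
    """Add `order` to the fitness for every complete block of ones."""
    total = 0
    for start in range(0, len(individual) - order + 1, order):
        block = individual[start:start + order]
        if all(bit == 1 for bit in block):
            total += order
    return (total,)


print(royal_road1_sketch([1] * 8 + [0] * 8, 8))        # (8,)
print(royal_road1_sketch([1] * 7 + [0] + [1] * 8, 8))  # (8,): one zero spoils a block
print(royal_road1_sketch([1] * 16, 8))                 # (16,)
```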

Symbolic Regression

deap.benchmarks.gp.kotanchek(data)

Kotanchek benchmark function.

- Range: \(\mathbf{x} \in [-1, 7]^2\)
- Function: \(f(\mathbf{x}) = \frac{e^{-(x_1 - 1)^2}}{3.2 + (x_2 - 2.5)^2}\)
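Symbolic regression benchmarks are typically used to generate target values for sample points. The sketch below evaluates the Kotanchek formula on a coarse grid over the stated range. The name kotanchek_sketch, the assumption that data is a pair (x1, x2), and the plain float return are illustrative choices, not DEAP's documented behaviour.

```python
import math


def kotanchek_sketch(data):
    """Evaluate the Kotanchek formula for a pair (x1, x2)."""
    x1, x2 = data
    return math.exp(-(x1 - 1.0) ** 2) / (3.2 + (x2 - 2.5) ** 2)


# Build (input, target) samples on a coarse grid over [-1, 7]^2.
grid = [-1.0 + 2.0 * k for k in range(5)]  # -1, 1, 3, 5, 7
samples = [((x1, x2), kotanchek_sketch((x1, x2))) for x1 in grid for x2 in grid]
print(len(samples), max(target for _, target in samples))
```

The function peaks at (1, 2.5), where the numerator is 1 and the denominator is 3.2.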

deap.benchmarks.gp.salustowicz_1d(data)

Salustowicz benchmark function.

- Range: \(x \in [0, 10]\)
- Function: \(f(x) = e^{-x} x^3 \cos(x) \sin(x) (\cos(x) \sin^2(x) - 1)\)

deap.benchmarks.gp.salustowicz_2d(data)

Salustowicz benchmark function.

- Range: \(\mathbf{x} \in [0, 7]^2\)
- Function: \(f(\mathbf{x}) = e^{-x_1} x_1^3 \cos(x_1) \sin(x_1) (\cos(x_1) \sin^2(x_1) - 1) (x_2 -5)\)

deap.benchmarks.gp.unwrapped_ball(data)

Unwrapped ball benchmark function.

- Range: \(\mathbf{x} \in [-2, 8]^n\)
- Function: \(f(\mathbf{x}) = \frac{10}{5 + \sum_{i=1}^n (x_i - 3)^2}\)

deap.benchmarks.gp.rational_polynomial(data)

Rational polynomial benchmark function.

- Range: \(\mathbf{x} \in [0, 2]^3\)
- Function: \(f(\mathbf{x}) = \frac{30 (x_1 - 1) (x_3 - 1)}{x_2^2 (x_1 - 10)}\)

deap.benchmarks.gp.rational_polynomial2(data)

Rational polynomial benchmark function.

- Range: \(\mathbf{x} \in [0, 6]^2\)
- Function: \(f(\mathbf{x}) = \frac{(x_1 - 3)^4 + (x_2 - 3)^3 - (x_2 - 3)}{(x_2 - 2)^4 + 10}\)

Moving Peaks Benchmark

Re-implementation of the Moving Peaks Benchmark by Jurgen Branke, with the addition of the fluctuating number of peaks presented in du Plessis and Engelbrecht, 2013, Self-Adaptive Environment with Fluctuating Number of Optima.

-

class

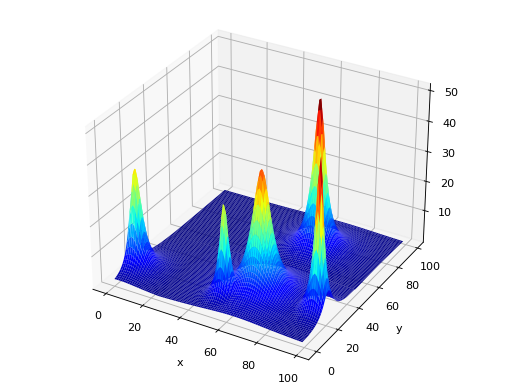

deap.benchmarks.movingpeaks.MovingPeaks(self, dim[, pfunc][, npeaks][, bfunc][, random][, ...])[source]¶ The Moving Peaks Benchmark is a fitness function changing over time. It consists of a number of peaks, changing in height, width and location. The peaks function is given by pfunc, which is either a function object or a list of function objects (the default is

function1()). The number of peaks is determined by npeaks (which defaults to 5). This parameter can be either a integer or a sequence. If it is set to an integer the number of peaks won’t change, while if set to a sequence of 3 elements, the number of peaks will fluctuate between the first and third element of that sequence, the second element is the initial number of peaks. When fluctuating the number of peaks, the parameter number_severity must be included, it represents the number of peak fraction that is allowed to change. The dimensionality of the search domain is dim. A basis function bfunc can also be given to act as static landscape (the default is no basis function). The argument random serves to grant an independent random number generator to the moving peaks so that the evolution is not influenced by number drawn by this object (the default uses random functions from the Python modulerandom). Various other keyword parameters listed in the table below are required to setup the benchmark, default parameters are based on scenario 1 of this benchmark.Parameter SCENARIO_1(Default)SCENARIO_2SCENARIO_3Details pfuncfunction1()cone()cone()The peak function or a list of peak function. npeaks5 10 50 Number of peaks. If an integer, the number of peaks won’t change, if a sequence it will fluctuate [min, current, max]. bfuncNoneNonelambda x: 10Basis static function. min_coord0.0 0.0 0.0 Minimum coordinate for the centre of the peaks. max_coord100.0 100.0 100.0 Maximum coordinate for the centre of the peaks. min_height30.0 30.0 30.0 Minimum height of the peaks. max_height70.0 70.0 70.0 Maximum height of the peaks. uniform_height50.0 50.0 0 Starting height for all peaks, if uniform_height <= 0the initial height is set randomly for each peak.min_width0.0001 1.0 1.0 Minimum width of the peaks. 
max_width0.2 12.0 12.0 Maximum width of the peaks uniform_width0.1 0 0 Starting width for all peaks, if uniform_width <= 0the initial width is set randomly for each peak.lambda_0.0 0.5 0.5 Correlation between changes. move_severity1.0 1.5 1.0 The distance a single peak moves when peaks change. height_severity7.0 7.0 1.0 The standard deviation of the change made to the height of a peak when peaks change. width_severity0.01 1.0 0.5 The standard deviation of the change made to the width of a peak when peaks change. period5000 5000 1000 Period between two changes. Dictionaries

SCENARIO_1,SCENARIO_2andSCENARIO_3of this module define the defaults for these parameters. The scenario 3 requires a constant basis function which can be given as a lambda functionlambda x: constant.The following shows an example of scenario 1 with non uniform heights and widths.

(Source code, png, hires.png, pdf)

Benchmarks tools

Module containing tools that are useful when benchmarking algorithms.

deap.benchmarks.tools.convergence(first_front, optimal_front)

Given a Pareto front first_front and the optimal Pareto front, this function returns a metric of convergence of the front as explained in the original NSGA-II article by K. Deb. The smaller the value is, the closer the front is to the optimal one.
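As a rough illustration of such a convergence metric, the sketch below averages, over the points of a front, the Euclidean distance to the nearest point of the optimal front. It works on plain tuples of objective values rather than DEAP individuals; the name convergence_sketch and that simplification are assumptions, and it needs Python 3.8 or later for math.dist.

```python
import math


def convergence_sketch(front, optimal_front):
    """Mean distance from each front point to its nearest optimal point."""
    distances = []
    for point in front:
        nearest = min(math.dist(point, opt) for opt in optimal_front)
        distances.append(nearest)
    return sum(distances) / len(distances)


optimal = [(0.0, 1.0), (0.5, 0.5), (1.0, 0.0)]
print(convergence_sketch(optimal, optimal))       # 0.0: the front is optimal
print(convergence_sketch([(0.0, 2.0)], optimal))  # 1.0: one unit away
```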

deap.benchmarks.tools.diversity(first_front, first, last)

Given a Pareto front first_front and the two extreme points of the optimal Pareto front, this function returns a metric of the diversity of the front as explained in the original NSGA-II article by K. Deb. The smaller the value is, the better the front is.

deap.benchmarks.tools.noise(noise)

Decorator for evaluation functions; it evaluates the objective function and adds noise by calling the function(s) provided in the noise argument. The noise functions are called without any argument; consider using the Toolbox or Python’s functools.partial() to provide any required argument. If a single function is provided, it is applied to all objectives of the evaluation function. If a list of noise functions is provided, it must be of length equal to the number of objectives. The noise argument also accepts None, which will leave the objective without noise.

This decorator adds a noise() method to the decorated function.

noise.noise(noise)

Set the current noise to noise. After decorating the evaluation function, this function will be available directly from the function object.

    import functools
    import random

    from deap.benchmarks.tools import noise

    # Gaussian noise source that can be called without arguments
    prand = functools.partial(random.gauss, mu=0.0, sigma=1.0)

    @noise(prand)
    def evaluate(individual):
        return sum(individual),

    # This will remove noise from the evaluation function
    evaluate.noise(None)

deap.benchmarks.tools.rotate(matrix)

Decorator for evaluation functions; it rotates the objective function by matrix, which should be a valid orthogonal NxN rotation matrix, with N the length of an individual. When called, the decorated function should take as first argument the individual to be evaluated. The inverse rotation matrix is actually applied to the individual and the resulting list is given to the evaluation function. Thus, the evaluation function shall not be expecting an individual as it will receive a plain list (numpy.array). The multiplication is done using numpy.

This decorator adds a rotate() method to the decorated function.

Note: A random orthogonal matrix Q can be created via QR decomposition.

    import numpy

    # n is the length of an individual
    A = numpy.random.random((n, n))
    Q, _ = numpy.linalg.qr(A)

rotate.rotate(matrix)

Set the current rotation to matrix. After decorating the evaluation function, this function will be available directly from the function object.

    import numpy

    from deap.benchmarks.tools import rotate

    # Create a random orthogonal matrix (n is the length of an individual)
    A = numpy.random.random((n, n))
    Q, _ = numpy.linalg.qr(A)

    @rotate(Q)
    def evaluate(individual):
        return sum(individual),

    # This will reset rotation to identity
    evaluate.rotate(numpy.identity(n))

deap.benchmarks.tools.scale(factor)

Decorator for evaluation functions; it scales the objective function by factor, which should be the same length as the individual size. When called, the decorated function should take as first argument the individual to be evaluated. The inverse factor vector is actually applied to the individual and the resulting list is given to the evaluation function. Thus, the evaluation function shall not be expecting an individual as it will receive a plain list.

This decorator adds a scale() method to the decorated function.

scale.scale(factor)

Set the current scale to factor. After decorating the evaluation function, this function will be available directly from the function object.

    from deap.benchmarks.tools import scale

    @scale([0.25, 2.0, ..., 0.1])
    def evaluate(individual):
        return sum(individual),

    # This will cancel the scaling
    evaluate.scale([1.0, 1.0, ..., 1.0])

deap.benchmarks.tools.translate(vector)

Decorator for evaluation functions; it translates the objective function by vector, which should be the same length as the individual size. When called, the decorated function should take as first argument the individual to be evaluated. The inverse translation vector is actually applied to the individual and the resulting list is given to the evaluation function. Thus, the evaluation function shall not be expecting an individual as it will receive a plain list.

This decorator adds a translate() method to the decorated function.

translate.translate(vector)

Set the current translation to vector. After decorating the evaluation function, this function will be available directly from the function object.

    from deap.benchmarks.tools import translate

    @translate([0.25, 0.5, ..., 0.1])
    def evaluate(individual):
        return sum(individual),

    # This will cancel the translation
    evaluate.translate([0.0, 0.0, ..., 0.0])
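To show what "the inverse translation vector is applied to the individual" can look like in practice, here is a minimal standalone decorator written in plain Python. The name translate_sketch and its internals are hypothetical and are not DEAP's implementation; the sketch only mirrors the behaviour described above, including a translate() method attached to the decorated function.

```python
from functools import wraps


def translate_sketch(vector):
    """Decorator factory: shift the individual by -vector before evaluating."""
    def decorator(func):
        @wraps(func)
        def wrapper(individual, *args, **kwargs):
            # Apply the inverse translation and pass a plain list along.
            shifted = [x - v for x, v in zip(individual, wrapper.vector)]
            return func(shifted, *args, **kwargs)

        def set_vector(new_vector):
            wrapper.vector = list(new_vector)

        wrapper.vector = list(vector)
        wrapper.translate = set_vector  # lets callers change the shift later
        return wrapper
    return decorator


@translate_sketch([1.0, 2.0])
def evaluate(individual):
    return (sum(x * x for x in individual),)


print(evaluate([1.0, 2.0]))      # (0.0,): the optimum moved to (1, 2)
evaluate.translate([0.0, 0.0])   # cancel the translation
print(evaluate([1.0, 2.0]))      # (5.0,)
```

Because the evaluation function receives a plain list, it should not rely on attributes of the original individual object.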
